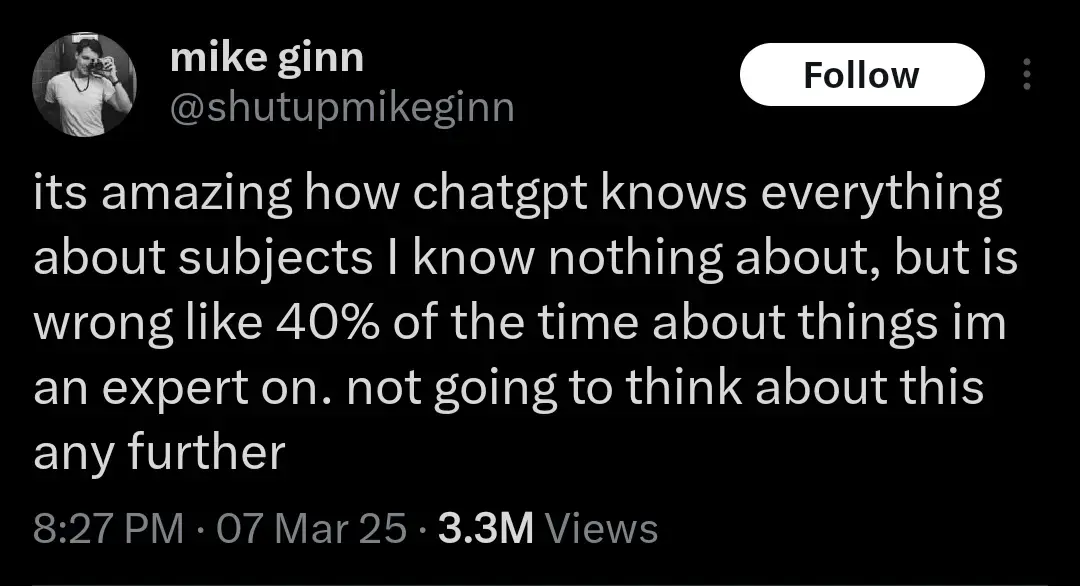

Another realization might be that the humans whose output ChatGPT was trained on were probably already 40% wrong about everything. But let’s not think about that either. AI Bad!

This is a salient point that’s well worth discussing. We should not be training large language models on any supposedly factual information that people put out. It’s super easy to call out a bad research study and have it retracted. But you can’t just explain to an AI that the study was wrong; you have to completely retrain it every time. Exacerbating this issue is the way that people tend to view large language models as somehow objective describers of reality, because they’re synthetic and emotionless. In truth, an AI holds exactly the same biases as the people who put together the data it was trained on.

I’ll bite. Let’s think:

- there are three humans who are 98% right about what they say, and where they know they might be wrong, they indicate it

- now there is an llm (fuck capitalization, I hate the ways they are shoved everywhere that much) trained on their output

- now llm is asked about the topic and computes the answer string

By definition that answer string can contain all the probably-wrong things without proper indicators (“might”, “under such and such circumstances” etc)

If you want to say 40% wrong llm means 40% wrong sources, prove me wrong
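To make the thought experiment above concrete, here is a minimal sketch with made-up numbers. The `Claim` class, the 90/8/2 split, and the `strip_hedges` step are all hypothetical; nothing here measures a real model or dataset. It only shows one mechanism: if hedges get dropped, the share of flatly asserted wrong statements goes up even though the sources did not get any worse.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Claim:
    correct: bool
    hedged: bool  # True if the author flagged it ("might", "under some circumstances", ...)

def make_source():
    # Hypothetical source: 100 claims, 98 correct, and both wrong ones are hedged.
    return (
        [Claim(correct=True, hedged=False)] * 90
        + [Claim(correct=True, hedged=True)] * 8
        + [Claim(correct=False, hedged=True)] * 2
    )

def strip_hedges(claims):
    # Assumed failure mode: the output repeats every claim but loses the hedge.
    return [Claim(correct=c.correct, hedged=False) for c in claims]

def confidently_wrong_rate(claims):
    # Share of claims that are wrong AND stated without any hedge.
    bad = sum(1 for c in claims if not c.correct and not c.hedged)
    return bad / len(claims)

source = make_source()
print(confidently_wrong_rate(source))                # 0.0
print(confidently_wrong_rate(strip_hedges(source)))  # 0.02
```

The toy numbers are small on purpose. The point is only that the error rate of the output is not automatically the error rate of the sources.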

It’s more up to you to prove that a hypothetical edge case you dreamed up is more likely than what happens in a normal bell curve. Given the size of typical LLM data this seems futile, but if that’s how you want to spend your time, hey knock yourself out.

Lol. Be my guest and knock yourself out, dreaming you know things

The quote was originally about news and journalists.

The phenomenon is called Gell-Mann amnesia

What the fuck is vibe coding… Whatever it is I hate it already.

Using AI to hack together code without truly understanding what you’re doing

Andrej Karpathy (one of the founders of OpenAI, left OpenAI, worked for Tesla from 2017 to 2022, worked for OpenAI a bit more, and is now working on his startup “Eureka Labs - we are building a new kind of school that is AI native”) made a tweet defining the term:

There’s a new kind of coding I call “vibe coding”, where you fully give in to the vibes, embrace exponentials, and forget that the code even exists. It’s possible because the LLMs (e.g. Cursor Composer w Sonnet) are getting too good. Also I just talk to Composer with SuperWhisper so I barely even touch the keyboard. I ask for the dumbest things like “decrease the padding on the sidebar by half” because I’m too lazy to find it. I “Accept All” always, I don’t read the diffs anymore. When I get error messages I just copy paste them in with no comment, usually that fixes it. The code grows beyond my usual comprehension, I’d have to really read through it for a while. Sometimes the LLMs can’t fix a bug so I just work around it or ask for random changes until it goes away. It’s not too bad for throwaway weekend projects, but still quite amusing. I’m building a project or webapp, but it’s not really coding - I just see stuff, say stuff, run stuff, and copy paste stuff, and it mostly works.

People ignore the “It’s not too bad for throwaway weekend projects” part, and try to use this style of coding to create “production-grade” code… Let’s just say it’s not going well.

source (xcancel link)

It’s too bad that some people seem to not comprehend that all ChatGPT is doing is word prediction. All it knows is which next word fits best based on the words before it. To call it AI is an insult to AI… we used to call OCR AI, now we know better.
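For anyone who wants to see what “predict the next word from the words before it” looks like in its crudest form, here is a toy sketch. It is a plain bigram counter, not how ChatGPT works: real LLMs use neural networks over tokens with a long context. The function names and the tiny corpus are made up for illustration.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    # Count, for every word, which words follow it and how often.
    words = text.lower().split()
    counts = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts

def predict_next(counts, word):
    # Return the most frequent follower of `word`, or None if it was never seen.
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

corpus = "the cat sat on the mat and the cat slept"
model = train_bigrams(corpus)

print(predict_next(model, "the"))    # cat
print(predict_next(model, "sat"))    # on
print(predict_next(model, "slept"))  # None
```

The model has no idea what a cat is. It only knows that “cat” followed “the” more often than “mat” did in the text it saw.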